As payment technologies have evolved, many measures against counterfeiting and unauthorized use have been put into practice. EMV is one of them: it made duplicating cards for card-present transactions far more difficult and allowed cards to be used offline within parameters set by the issuer.

You have several options when deciding how best to meet PCI DSS requirements. To protect cardholder data and achieve PCI DSS compliance, many organizations combine network segmentation with encryption, tokenization, or other methods of obfuscation.

Each of these technologies has its advantages and disadvantages, but one method that is especially effective at reducing PCI scope, minimizing risk, and simplifying PCI compliance is tokenization. It also helps you maximize the business use, agility, and flexibility of your data.

Whereas EMV protects card-present transactions, tokenization is one of the methods used to protect sensitive data in card-not-present transactions and in newer transaction types that require card information to be entered through interfaces, stored, and moved between systems.

What is Tokenization?

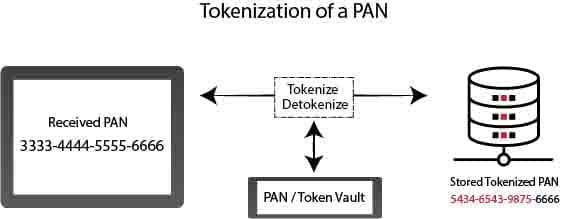

By definition, tokenization is the process of replacing sensitive data (in card payments, the card number) with a different value, called a token, generated by a specific algorithm, so that the token cannot be used in place of the original if it is captured.

Tokenization represents sensitive data with a random, unique value. When information such as a credit card number or an identification number is tokenized, the original data no longer appears in the clear in any transaction. Using the token in its place keeps the sensitive data secure.

Because the card is not physically present in card-not-present transactions, these transactions are exposed to risks such as data breaches and fraud. With tokenization, card information is not disclosed during online transactions. Because a token represents the credit card, the card information is not stored in the merchant's operational systems, and only the token travels between systems.

The token contains no critical information: the sensitive data has been replaced with meaningless random data. Even if the token is captured, the card information cannot be obtained from it.

Many tokens can be created for a single credit card and used securely across many systems. Tokens are flexible because they can be deleted or suspended at will. Customers do not need to re-enter their card details for every transaction, so payments can be completed with a single click. The card information itself is stored securely in a system called a vault.
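
The vault model described above can be sketched in a few lines of Python. This is a minimal, in-memory illustration under stated assumptions: the class and method names (`TokenVault`, `tokenize`, `detokenize`, `suspend`, `resume`, `delete`) are hypothetical, and a plain dictionary stands in for what would in practice be an encrypted, access-controlled data store inside the CDE.

```python
import secrets
from dataclasses import dataclass
from typing import Dict


@dataclass
class VaultEntry:
    pan: str
    active: bool = True


class TokenVault:
    """Hypothetical in-memory vault. A real vault encrypts stored PANs,
    runs inside the CDE, and enforces strict access control."""

    def __init__(self) -> None:
        self._entries: Dict[str, VaultEntry] = {}  # token -> vault entry

    def tokenize(self, pan: str) -> str:
        # The token is random, so it has no mathematical link to the PAN.
        token = secrets.token_hex(16)
        while token in self._entries:  # regenerate on the (unlikely) collision
            token = secrets.token_hex(16)
        self._entries[token] = VaultEntry(pan)
        return token

    def detokenize(self, token: str) -> str:
        entry = self._entries.get(token)
        if entry is None:
            raise KeyError("unknown or deleted token")
        if not entry.active:
            raise PermissionError("token is suspended")
        return entry.pan

    def suspend(self, token: str) -> None:
        self._entries[token].active = False

    def resume(self, token: str) -> None:
        self._entries[token].active = True

    def delete(self, token: str) -> None:
        self._entries.pop(token, None)


# Usage: several tokens can represent the same card.
vault = TokenVault()
t1 = vault.tokenize("4111111111111111")
t2 = vault.tokenize("4111111111111111")
print(t1 != t2)              # True
print(vault.detokenize(t1))  # 4111111111111111
vault.suspend(t1)            # detokenize(t1) now raises PermissionError
vault.delete(t2)             # detokenize(t2) now raises KeyError
```

Because the token is random rather than computed from the PAN, the only way back to the card number is through the vault, which is why the vault itself has to stay inside the CDE.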

In short, tokenization protects sensitive data by replacing it with tokens:

- Tokenization is the replacement of sensitive information with tokenized information.

- Sensitive information is stored in encrypted form in the central tokenization system.

- Because all systems communicate using tokens, sensitive information is not exposed even if network traffic is intercepted.

- Credit card information is stored only in the tokenization system; other applications and databases hold only tokens. This narrows the PCI storage scope and makes the environment more secure.

- Because the tokenization system has its own authorization and switching components, it is closed to unauthorized access. Unauthorized access attempts can be detected quickly and fed into security event management.

- With tokenization, clear-text credit card sharing can be prevented when sharing information with suppliers.

When talking about tokenization in payment systems, there are generally three types of usage: Acquiring Token, Issuer Token, and Payment Token.

Under PCI DSS, sensitive authentication data (SAD), such as the CVV2 and the PIN, must not be stored after authorization and therefore cannot be tokenized. As a result, tokenization in the payments world applies only to the card number (PAN).
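
As a rough illustration, isolating the PAN before tokenization might look like the following Python sketch. The field names (`pan`, `cvv2`, `pin`, `pin_block`, `track_data`) and the function name are hypothetical; in a real flow, SAD would be forwarded for authorization and then discarded, never stored.

```python
from typing import Dict, Tuple

# Hypothetical field names for sensitive authentication data (SAD).
SAD_FIELDS = {"cvv2", "pin", "pin_block", "track_data"}


def split_for_tokenization(request: Dict[str, str]) -> Tuple[str, Dict[str, str]]:
    """Return (pan, other_fields) with every SAD field removed.

    Only the PAN is sent to the tokenization system. SAD never reaches
    storage, so it is never tokenized.
    """
    pan = request["pan"]
    other_fields = {
        key: value
        for key, value in request.items()
        if key != "pan" and key not in SAD_FIELDS
    }
    return pan, other_fields


request = {"pan": "4111111111111111", "cvv2": "123", "expiry": "12/29", "amount": "25.00"}
pan, rest = split_for_tokenization(request)
print(pan)   # 4111111111111111
print(rest)  # {'expiry': '12/29', 'amount': '25.00'}
```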

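According to PCI guidance, for a tokenization system to be considered secure, the probability of guessing the PAN from a token must be no greater than one in a million. One of the best-known attacks on tokens generated with unkeyed hash functions is the rainbow table: a precomputed table of hashes of candidate card numbers that lets an attacker look up the PAN behind a captured token.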
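
To see why unkeyed hashes make weak tokens, consider a token computed as a plain SHA-256 hash of the PAN. The Python sketch below (function names hypothetical) recovers the PAN by brute force when the attacker knows the leading digits (the BIN) and the last four digits, which are often printed on receipts. With a 6-digit BIN and the last four known, only 10^6 candidates remain, and exactly one in ten of them passes the Luhn check; a rainbow table applies the same idea with precomputed hashes. The example assumes an 8-digit prefix to keep the search small.

```python
import hashlib
from typing import Optional


def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        digit = int(ch)
        if i % 2 == 1:  # double every second digit, starting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def naive_hash_token(pan: str) -> str:
    # Unsalted, unkeyed hash of the PAN: the weak pattern being demonstrated.
    return hashlib.sha256(pan.encode()).hexdigest()


def brute_force_pan(token: str, prefix: str, suffix: str, length: int = 16) -> Optional[str]:
    """Try every PAN that starts with `prefix` and ends with `suffix`."""
    unknown = length - len(prefix) - len(suffix)
    if unknown < 1:
        raise ValueError("no unknown digits to search")
    for n in range(10 ** unknown):
        candidate = f"{prefix}{n:0{unknown}d}{suffix}"
        if not luhn_valid(candidate):  # 9 in 10 candidates fail the checksum
            continue
        if naive_hash_token(candidate) == token:
            return candidate
    return None


stolen_token = naive_hash_token("4111111111111111")
print(brute_force_pan(stolen_token, prefix="41111111", suffix="1111"))
# 4111111111111111
```

A keyed function (such as an HMAC with a protected secret key) or a random vault token removes this shortcut, because the attacker can no longer compute candidate tokens.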

What Does Tokenization Do?

Tokenization prevents data from being used if unauthorized persons capture it while it is being transferred between environments. When the target system needs the original data, the token must be de-tokenized. Therefore, if tokenization is performed with a cryptographic key, the encryption must be reversible.

Tokens that are not created with cryptographic encryption are de-tokenized by looking them up in secure systems called "data vaults."

At this point, it is useful to take a look at the tokenization methods and types:

There are two types of tokens: reversible and irreversible.

Reversible tokens can be de-tokenized either with a cryptographic key or by looking up the card information associated with the token in a database.

Irreversible tokens come in two types: authenticatable and non-authenticatable. Authenticatable tokens can be created with one-way mathematical functions.
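
An authenticatable irreversible token can be sketched with a keyed one-way function. The Python example below assumes HMAC-SHA-256 from the standard library; it is one reasonable construction, not necessarily what any particular product uses, and the function names are hypothetical. The token can be recomputed and compared, so a system can check a presented PAN against a stored token, but the token itself cannot be decrypted.

```python
import hashlib
import hmac
import secrets


def make_token(pan: str, key: bytes) -> str:
    """Irreversible, authenticatable token: HMAC-SHA-256 of the PAN.

    Without the key, candidate tokens cannot be computed, which blocks
    brute-force and rainbow-table attacks. The key must still be protected:
    anyone holding it could search the small PAN space.
    """
    return hmac.new(key, pan.encode(), hashlib.sha256).hexdigest()


def verify_pan(pan: str, token: str, key: bytes) -> bool:
    """Check whether a presented PAN corresponds to a stored token."""
    return hmac.compare_digest(make_token(pan, key), token)  # constant time


key = secrets.token_bytes(32)  # in practice, held in an HSM or key manager
stored = make_token("4111111111111111", key)
print(verify_pan("4111111111111111", stored, key))  # True
print(verify_pan("4111111111111112", stored, key))  # False
```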

Token Types by Format

Format-preserving tokens are the easiest to adopt, because database fields designed for card numbers can also hold tokens without any changes.

With format-preserving tokenization, it is essential to have a way to distinguish tokens from real card numbers. Otherwise, card numbers may be mistaken for tokens and exposed accidentally.

A format-preserving token looks similar to a credit card number.

Card Number: 4111 1111 1111 1111

Format-Preserving Token: 4111 8765 2345 1111

A non-format-preserving token looks different from a credit card number: it shares neither the card number's length nor its character set.

Card Number: 4111 1111 1111 1111

Non-Format-Preserving Token: 25c92e17-80f6-415f-9d65-7395a32u0223
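
Both token formats can be sketched in Python as follows. The function names are hypothetical, and the number of preserved leading and trailing digits is a parameter; the card example above keeps the first four and last four. Making format-preserving tokens deliberately fail the Luhn check is one approach sometimes used to keep them distinguishable from real card numbers; it is an assumption here, not a PCI mandate.

```python
import secrets
import uuid


def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        digit = int(ch)
        if i % 2 == 1:  # double every second digit, starting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def format_preserving_token(pan: str, keep_first: int = 4, keep_last: int = 4) -> str:
    """Same length and digits-only, with a random middle that fails Luhn."""
    middle_len = len(pan) - keep_first - keep_last
    if keep_last < 1 or middle_len < 1:
        raise ValueError("need at least one trailing and one random digit")
    head, tail = pan[:keep_first], pan[-keep_last:]
    while True:
        middle = "".join(secrets.choice("0123456789") for _ in range(middle_len))
        token = head + middle + tail
        if not luhn_valid(token):  # a Luhn-invalid value cannot be a valid card number
            return token


def opaque_token() -> str:
    """Non-format-preserving token: a random UUID with no card-like shape."""
    return str(uuid.uuid4())


token = format_preserving_token("4111111111111111")
print(len(token), token[:4], token[-4:], luhn_valid(token))  # 16 4111 1111 False
print(opaque_token())  # a random 36-character UUID string
```

With this convention, any system that receives a 16-digit value can run the Luhn check to decide whether it is looking at a token or a real card number.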

Token Types by Number of Uses

Single-Use Token: A token used for a single transaction. It is processed faster than a multi-use token. Because a new token is created for every transaction, the token vault grows continuously and needs more storage, and constantly creating and saving new tokens can lead to token collisions.

Multi-Use Token: The same token represents the same card across many transactions; every time that card is tokenized, the same token is returned. This enables data analysis and keeps the vault small.
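
The difference between the two can be sketched with a hypothetical `Tokenizer` class in Python. Single-use tokens always get a fresh vault entry, so the vault grows with every transaction; multi-use tokens are looked up first, so the same card keeps the same token. Indexing by the raw PAN, as done here for brevity, is a simplification; a real system would likely index by a keyed hash of the PAN instead.

```python
import secrets
from typing import Dict


class Tokenizer:
    """Hypothetical vault that issues single-use and multi-use tokens."""

    def __init__(self) -> None:
        self._vault: Dict[str, str] = {}      # token -> PAN
        self._multi_use: Dict[str, str] = {}  # PAN -> its multi-use token

    def __len__(self) -> int:
        return len(self._vault)

    def _new_token(self) -> str:
        token = secrets.token_urlsafe(16)
        while token in self._vault:  # regenerate on a token collision
            token = secrets.token_urlsafe(16)
        return token

    def single_use(self, pan: str) -> str:
        # A fresh token for every transaction: the vault keeps growing.
        token = self._new_token()
        self._vault[token] = pan
        return token

    def multi_use(self, pan: str) -> str:
        # Reuse the card's existing token, so transactions on the same card
        # can be correlated and the vault stays small.
        if pan not in self._multi_use:
            token = self._new_token()
            self._vault[token] = pan
            self._multi_use[pan] = token
        return self._multi_use[pan]


tk = Tokenizer()
card = "4111111111111111"
print(tk.single_use(card) == tk.single_use(card))  # False
print(tk.multi_use(card) == tk.multi_use(card))    # True
print(len(tk))                                     # 3
```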

Tokenization Vs. Encryption

Encryption and tokenization are related but not the same thing. Encryption is designed to be reversed by anyone who holds the key, whereas tokens can be irreversible: from an irreversible token, there is no mathematical way to recover the original value.

Encryption hides data by making it unreadable. Tokenization represents data with a random value that has no relationship to the data. Encrypted data can be restored by decryption, but card information cannot be derived from a randomly generated token by any mathematical operation.

Encryption is only as secure as its key length and algorithm strength allow. Data is safe while it remains encrypted, but it usually has to be decrypted and used in cleartext for the payment to be made, and cleartext data is exposed to attack.

Encryption transforms data with a key so that parties without the decryption key cannot read it. Tokenization relies on the token (and, for vault-based systems, the vault that maps it back to the data), whereas encryption relies on the key.

A reversible token can, in essence, be considered partly equivalent to encryption, since both techniques rely on the same logic. However, encryption is a general concept and can be performed with any key length, whereas a PCI-compliant tokenization system must use strong cryptography with a key of at least 128 bits (for example, AES).

What are the PCI DSS Tokenization Requirements?

The PCI Security Standards Council has published guidelines for evaluating tokenization products used for PCI DSS compliance. The following requirements for tokenization solutions are summarized from the PCI DSS Tokenization Guidelines:

- Tokenization systems must not provide primary account numbers (PANs) in any response to any application, system, network, or user outside your strictly defined cardholder data environment (CDE). Your customers' PANs must be kept confidential and seen only by those who need to see them. If any part of your system can return a PAN to another component on demand, the likelihood of the PAN leaking to unauthorized persons increases dramatically.

- All components of your tokenization system must reside on secure internal networks that are isolated from untrusted and out-of-scope networks. They should be configured to reject all suspicious and unverified traffic. Appropriate techniques include segmenting your network from out-of-scope subnets, using VPNs, and adopting zero-trust network security strategies.

- Only trusted communications should be allowed into or out of the CDE. All untrusted traffic must be denied, and procedures must be in place to deal with such traffic when it is detected.

- Any cardholder data you store must be encrypted with strong, industry-tested cryptography such as AES-256, and cardholder data transmitted over open or public networks must likewise be protected with strong cryptography and secure protocols.

- As required by PCI DSS Requirements 7 and 8, tokenization systems must have strong authentication and access control measures. Specifically, access to cardholder data must be restricted on a need-to-know basis (Requirement 7), and every person given access must have a unique ID (Requirement 8). These measures also make it easier to identify and block people who do not need access to the data.

- The tokenization system must be built to strict configuration standards and protected against vulnerabilities and cyberattacks.

- As specified in PCI DSS Requirement 3.1, there must be a quarterly process for identifying and securely deleting stored cardholder data that exceeds your defined retention period. Your tokenization system must support a data retention policy that specifies how data is deleted.

- The tokenization system should log and monitor all traffic passing through the network. Audit logs will make it easier to detect suspicious traffic. There should also be a procedure to alert network security experts to deal with suspicious traffic when detected.
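
The retention and logging requirements can be combined in a small routine that drops stored records older than the retention period and writes each deletion to an audit log. The Python sketch below is hypothetical: the record layout, the logger name, and the 365-day retention period are assumptions (PCI DSS leaves the retention period to your own legal, regulatory, and business requirements), and in practice the routine would be scheduled to run at least quarterly.

```python
import logging
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("tokenization.audit")  # hypothetical logger name


@dataclass
class StoredRecord:
    token: str
    created_at: datetime


def purge_expired(
    records: List[StoredRecord],
    retention: timedelta,
    now: Optional[datetime] = None,
) -> List[StoredRecord]:
    """Return the records still within retention; log every deletion."""
    now = now or datetime.now(timezone.utc)
    kept = []
    for record in records:
        if now - record.created_at > retention:
            audit_log.info("purged token %s (created %s)",
                           record.token, record.created_at.isoformat())
        else:
            kept.append(record)
    return kept


now = datetime(2024, 4, 1, tzinfo=timezone.utc)
records = [
    StoredRecord("tok-old", now - timedelta(days=400)),
    StoredRecord("tok-new", now - timedelta(days=10)),
]
remaining = purge_expired(records, retention=timedelta(days=365), now=now)
print([r.token for r in remaining])  # ['tok-new']
```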

Encryption is still a widely used and proven technology. However, companies that rely on it take on hardware, software, and storage costs, and managing it increases the workload of system administrators and limits their flexibility.

Tokenization is likely to become more widespread, especially as its costs fall and it becomes easier to adopt.

Tokenization captures payment card data before it enters a merchant's environment and stores it in a secure vault. This achieves two things. First, it saves companies money by reducing the need to pay for the hardware, software, and internal systems required for network segmentation. Second, it improves protection by making the data unavailable to criminals and hackers: in a breach, the only things that can be stolen are tokens from which the attackers cannot recover the underlying card data.

In addition, by storing sensitive cardholder data outside your environment, you can effectively remove the systems that once hosted this data from PCI scope. Keeping sensitive cardholder data out of your environment simplifies the compliance process and shifts much of the responsibility to the PCI compliance and security professionals who operate the vault.

In the end, the choice is yours. Keep in mind, however, that encryption and tokenization are not mutually exclusive and can be used together; for example, a tokenization system stores the sensitive data it holds in encrypted form.

For detailed information, you can review the PCI SSC Tokenization documents below.

PCI SSC Information Supplement: PCI DSS Tokenization Guidelines

PCI SSC Tokenization Product Security Guidelines – Irreversible and Reversible Tokens